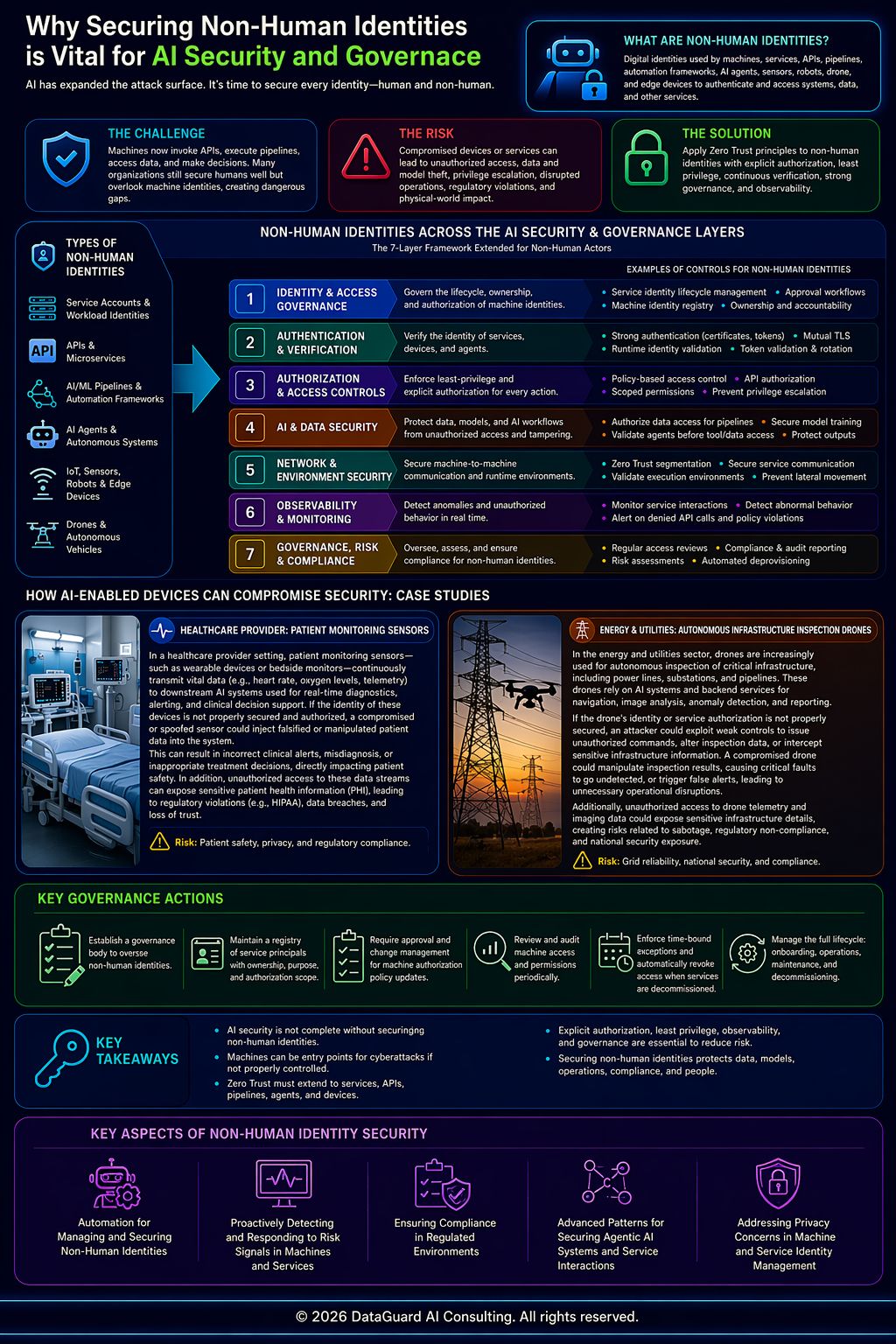

No identity is trusted—secure every machine, service, and agent across the AI ecosystem.

Non-Human Identities: The Hidden Layer of AI Risk

This article extends the ‘7 Layers of AI Security & Governance © Framework.’

The rapid expansion of AI has led to an explosion in the use of non-human entities—including services, APIs, pipelines, automation frameworks, AI agents, and a growing ecosystem of machines such as sensors, robots, drones, and edge devices.

Non-human identities are becoming one of the largest, and least understood, attack surfaces in AI systems.

These systems are no longer passive components. They actively:

invoke APIs

execute workflows

retrieve and process data

interact with other services and AI systems

As a result, they must be monitored, authorized, and secured with the same rigor as human users.

If left exposed, these entities can become entry points for cyberattacks, enabling:

unauthorized API invocation

uncontrolled pipeline execution

privilege escalation across services

data and model exfiltration

Despite this, many organizations still treat non-human identities as an afterthought, resulting in significant gaps in security and governance.

In modern AI environments, it is no longer sufficient to secure only human access. Non-human identities must be explicitly governed, authorized, and controlled across all systems and workflows.

Core Objectives

The objective of securing non-human identities is to:

enforce explicit authorization boundaries for machine and service identities

ensure service-to-service interactions are governed by defined policies

establish scoped privileges and least-privilege access for all machine actors

maintain verifiable identity context for machines, services, and agents

eliminate implicit trust relationships between systems and workflows

prevent unauthorized privilege escalation across service boundaries

ensure that automated workflows operate within strictly defined authorization scopes
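
As a minimal sketch of what explicit, scoped authorization can look like, the example below models a machine identity with an explicit set of grants and a deny-by-default check. The `MachineIdentity` class, the `is_authorized` function, and the identity and resource names are hypothetical and chosen only for illustration; a real deployment would typically delegate this decision to an IAM platform or policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MachineIdentity:
    """A hypothetical service, pipeline, or agent identity."""
    name: str
    scopes: frozenset  # explicit (action, resource) grants; nothing is implied


def is_authorized(identity: MachineIdentity, action: str, resource: str) -> bool:
    """Deny by default: allow only an exact, explicitly granted scope."""
    return (action, resource) in identity.scopes


# Illustrative identity: a RAG retriever that may only read one vector index.
retriever = MachineIdentity(
    name="rag-retriever",
    scopes=frozenset({("read", "vector-db/kb-index")}),
)

print(is_authorized(retriever, "read", "vector-db/kb-index"))   # True
print(is_authorized(retriever, "write", "vector-db/kb-index"))  # False
print(is_authorized(retriever, "read", "model-registry/prod"))  # False
```

Because nothing is granted unless it is listed, a new resource or action stays out of reach until someone deliberately adds it to the identity's scope.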

What This Means in AI Systems

In AI systems, securing non-human identities requires:

service identity provisioning and lifecycle management across APIs, pipelines, and agents

runtime authorization checks enforced through gateways, orchestration layers, and execution environments

policy-based access control for machine actors, evaluated dynamically at runtime

governance mechanisms to ensure machine principals themselves are authorized and controlled
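
One way to picture policy-based access control evaluated at runtime is a gateway function that checks every request against the rules registered for the calling machine principal. The sketch below assumes a hypothetical `Request` shape, a hypothetical `POLICIES` table, and illustrative principal and resource names; it is not tied to any particular gateway or policy product.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Request:
    """A hypothetical service-to-service request seen by a gateway."""
    principal: str
    action: str
    resource: str
    context: dict = field(default_factory=dict)


Policy = Callable[[Request], bool]

# Illustrative rules: every rule for a principal must pass at request time.
POLICIES: dict[str, list[Policy]] = {
    "training-pipeline": [
        lambda r: r.action == "read" and r.resource.startswith("datasets/"),
        lambda r: r.context.get("environment") == "training",
    ],
}


def gateway_authorize(request: Request) -> bool:
    """Evaluate the caller's policies on each request; unknown callers are denied."""
    rules = POLICIES.get(request.principal)
    if not rules:
        return False
    return all(rule(request) for rule in rules)


ok = Request("training-pipeline", "read", "datasets/claims", {"environment": "training"})
wrong_env = Request("training-pipeline", "read", "datasets/claims", {"environment": "laptop"})
unknown = Request("unregistered-agent", "read", "datasets/claims", {"environment": "training"})

print(gateway_authorize(ok))         # True
print(gateway_authorize(wrong_env))  # False
print(gateway_authorize(unknown))    # False
```

The decision depends on the request context as well as the identity, so the same principal can be allowed in one execution environment and denied in another.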

When these controls are absent or misconfigured, systems can establish uncontrolled service-to-service trust, leading to:

unauthorized API access

uncontrolled pipeline execution

agent privilege escalation

cross-system token propagation

systemic compromise across distributed environments

Non-human identities are now a primary attack surface.

How AI-Enabled Devices Can Compromise Security: Case Studies

AI-enabled machines and non-human identities can introduce significant security and governance risks when not properly controlled. The following examples illustrate how these risks manifest in real-world environments.

Healthcare Provider Environment (Patient Monitoring Sensors)

In a healthcare provider setting, patient monitoring sensors—such as wearable devices or bedside monitors—continuously transmit vital data (e.g., heart rate, oxygen levels, telemetry) to downstream AI systems used for real-time diagnostics, alerting, and clinical decision support. If the identity of these devices is not properly secured and authorized, a compromised or spoofed sensor could inject falsified or manipulated patient data into the system.

This can result in incorrect clinical alerts, misdiagnosis, or inappropriate treatment decisions, directly impacting patient safety. In addition, unauthorized access to these data streams can expose protected health information (PHI), leading to regulatory violations (e.g., HIPAA), data breaches, and loss of trust. Without strong identity validation and policy-based authorization, such devices become high-risk entry points into critical healthcare AI and data ecosystems.
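
To make the spoofed-sensor risk concrete, the sketch below shows one simple form of device identity validation: each registered device signs its readings with a per-device secret, and the ingestion service rejects anything it cannot verify. The device ID, key, and function names are hypothetical, and the shared-secret HMAC approach is an assumption made for brevity; production systems more commonly rely on device certificates and mutual TLS, and would also need replay protection such as timestamps or nonces.

```python
import hashlib
import hmac
import json

# Hypothetical registry of per-device secrets (demo value only).
DEVICE_KEYS = {"bedside-monitor-17": b"demo-key-not-for-production"}


def sign_reading(device_id: str, reading: dict, key: bytes) -> str:
    """Sign a reading with HMAC-SHA256 over a canonical JSON payload."""
    payload = json.dumps(
        {"device_id": device_id, "reading": reading}, sort_keys=True
    ).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_reading(device_id: str, reading: dict, signature: str) -> bool:
    """Accept a reading only from a registered device with a valid signature."""
    key = DEVICE_KEYS.get(device_id)
    if key is None:
        return False  # unregistered device: reject
    expected = sign_reading(device_id, reading, key)
    return hmac.compare_digest(expected, signature)


reading = {"heart_rate": 72, "spo2": 98}
sig = sign_reading("bedside-monitor-17", reading, DEVICE_KEYS["bedside-monitor-17"])

print(verify_reading("bedside-monitor-17", reading, sig))                 # True
print(verify_reading("bedside-monitor-17", {**reading, "spo2": 80}, sig)) # False
print(verify_reading("unknown-sensor", reading, sig))                     # False
```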

Energy & Utilities (Autonomous Infrastructure Inspection Drones)

In the energy and utilities sector, drones are increasingly used for autonomous inspection of critical infrastructure, including power lines, substations, and pipelines. These drones rely on AI systems and backend services for navigation, image analysis, anomaly detection, and reporting.

If the drone’s identity or service authorization is not properly secured, an attacker could exploit weak controls to issue unauthorized commands, alter inspection data, or intercept sensitive infrastructure information. For example, a compromised drone could manipulate inspection results, causing critical faults to go undetected, or trigger false alerts, leading to unnecessary operational disruptions.

Additionally, unauthorized access to drone telemetry and imaging data could expose sensitive infrastructure details, creating risks related to sabotage, regulatory non-compliance, and national security exposure. Without strict identity validation and policy-based authorization, such drones can become high-impact attack vectors within critical infrastructure ecosystems.

These examples highlight how non-human identities, if not properly governed, can quickly become high-impact attack vectors, reinforcing the need for Zero Trust principles of continuous verification, explicit authorization, and elimination of implicit trust across execution paths.

Key Shift

Traditional systems:

Security is primarily focused on human identities, with access controls designed around users and roles

AI-enabled systems:

Security must extend to non-human identities, including services, pipelines, and autonomous agents operating across distributed systems

This shift requires enforcing authorization at every stage of execution, including:

AI model training pipelines validating service authorization before accessing datasets and model artifacts

RAG pipelines enforcing authorization before querying vector databases and knowledge sources

agent runtime environments validating authorization before tool execution and API invocation

AI inference services validating calling service identity before model access

monitoring systems detecting abnormal service or agent behavior

pipeline execution halting when authorization checks fail at any stage
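
The last point, halting execution when an authorization check fails, can be sketched as a pipeline runner that re-checks the caller's grants before every stage. The `GRANTS` table, the stage names, and the `run_pipeline` function are hypothetical and only illustrate the pattern.

```python
class AuthorizationError(Exception):
    """Raised when a stage is not covered by the caller's grants."""


# Hypothetical grants: stage names each machine identity may execute.
GRANTS = {
    "rag-pipeline": {"embed-query", "query-vector-db"},
}


def run_pipeline(principal, stages, executed):
    """Run stages in order, re-checking authorization before each one."""
    allowed = GRANTS.get(principal, set())
    for name, stage in stages:
        if name not in allowed:
            # Halt immediately; later stages never run.
            raise AuthorizationError(
                f"{principal} is not authorized for stage '{name}'"
            )
        stage()
        executed.append(name)


executed = []
stages = [
    ("embed-query", lambda: None),
    ("query-vector-db", lambda: None),
    ("call-external-tool", lambda: None),  # not granted to rag-pipeline
    ("write-report", lambda: None),
]

try:
    run_pipeline("rag-pipeline", stages, executed)
except AuthorizationError as err:
    print(f"halted: {err}")

print(executed)  # ['embed-query', 'query-vector-db']
```

Checking before each stage, rather than once at the start, means a pipeline cannot carry an early approval into steps it was never authorized to run.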

AI Governance and Risk Implications

Securing non-human identities introduces critical governance requirements:

establish a governance body responsible for machine identities and service access controls

maintain a central registry of service principals, including ownership, purpose, and authorization scope

enforce change management and approval workflows for machine authorization policies

ensure traceability between machine identities and owning systems or teams

conduct periodic reviews of API, pipeline, and agent authorization policies

enforce time-bound approvals for elevated machine privileges

automatically revoke access for decommissioned services

manage the full lifecycle of non-human identities, from onboarding to decommissioning
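
Several of these requirements (a central registry, traceable ownership, time-bound elevation, and automatic revocation) can be illustrated with a small data model. The `ServicePrincipal` fields, the `effective_scopes` function, and the names and dates below are assumptions for this sketch, not a reference schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ServicePrincipal:
    """A hypothetical registry entry for one non-human identity."""
    name: str
    owner: str                      # accountable team or system
    purpose: str
    scopes: set                     # standing, least-privilege grants
    elevated_scopes: set = field(default_factory=set)
    elevated_until: Optional[datetime] = None  # expiry of elevated approval
    decommissioned: bool = False


def effective_scopes(principal: ServicePrincipal, now: datetime) -> set:
    """Compute what the identity may do at a given moment."""
    if principal.decommissioned:
        return set()  # decommissioned services lose all access
    scopes = set(principal.scopes)
    if principal.elevated_until is not None and now < principal.elevated_until:
        scopes |= principal.elevated_scopes  # elevation applies only until expiry
    return scopes


job = ServicePrincipal(
    name="model-retrain-job",
    owner="ml-platform-team",
    purpose="Scheduled model retraining",
    scopes={"datasets:read"},
    elevated_scopes={"model-registry:write"},
    elevated_until=datetime(2026, 1, 11, tzinfo=timezone.utc),
)

before = datetime(2026, 1, 10, tzinfo=timezone.utc)
after = datetime(2026, 1, 12, tzinfo=timezone.utc)

print(sorted(effective_scopes(job, before)))  # ['datasets:read', 'model-registry:write']
print(sorted(effective_scopes(job, after)))   # ['datasets:read']

job.decommissioned = True
print(effective_scopes(job, before))          # set()
```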

Without governance, non-human identities can quickly become:

untracked

over-privileged

high-risk entry points for attackers

Role of Observability

Observability plays a critical role in securing non-human identities by enabling:

real-time monitoring of service-to-service interactions

detection of unauthorized API calls and blocked execution attempts

identification of anomalous behavior in agents and automated workflows

continuous validation of authorization enforcement across execution paths
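
As a simple illustration of detecting unauthorized calls, the sketch below scans authorization decision events and flags machine identities with repeated denials. The event shape, the threshold, and the `flag_suspicious` function are hypothetical; real monitoring would draw on gateway or audit logs and use richer baselines than a fixed count.

```python
from collections import Counter


def flag_suspicious(events, max_denied=3):
    """Return principals whose denied-request count reaches the threshold."""
    denied = Counter(
        event["principal"] for event in events if event["decision"] == "deny"
    )
    return sorted(name for name, count in denied.items() if count >= max_denied)


# Illustrative authorization events, e.g. as emitted by a gateway.
events = [
    {"principal": "report-agent", "resource": "billing-api", "decision": "deny"},
    {"principal": "report-agent", "resource": "hr-api", "decision": "deny"},
    {"principal": "etl-pipeline", "resource": "datasets", "decision": "allow"},
    {"principal": "report-agent", "resource": "model-registry", "decision": "deny"},
    {"principal": "etl-pipeline", "resource": "billing-api", "decision": "deny"},
]

print(flag_suspicious(events))  # ['report-agent']
```

A burst of blocked attempts from a single agent across unrelated resources is the kind of signal that may indicate a compromised or misconfigured identity.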

Without observability, organizations cannot:

detect misuse

identify compromised services

respond to security incidents in real time

Zero Trust Alignment

Securing non-human identities is a core requirement of a Zero Trust architecture.

In a Zero Trust model:

no service, API, or agent is implicitly trusted

all interactions must be explicitly authorized and continuously validated

trust is non-transitive across workflows and execution steps

This ensures that:

service identities cannot propagate unchecked

access decisions are enforced at every boundary

execution remains secure across distributed and agentic systems
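
Non-transitive trust can be sketched with audience-bound tokens: a token issued for one service is rejected if it is forwarded to another. The `Token` class, the `accept` function, and the service names are hypothetical; in practice this role is usually played by an audience claim in a signed token, validated by each receiving service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    """A hypothetical access token bound to one caller and one target service."""
    subject: str         # the machine identity the token was issued to
    audience: str        # the only service allowed to accept it
    scopes: frozenset


def accept(token: Token, service: str, required_scope: str) -> bool:
    """Each service validates the token itself; trust does not carry over."""
    return token.audience == service and required_scope in token.scopes


# An agent obtains a token for the retrieval API only.
token = Token(
    subject="support-agent",
    audience="retrieval-api",
    scopes=frozenset({"documents:read"}),
)

print(accept(token, "retrieval-api", "documents:read"))  # True
# The same token forwarded to a different service is rejected.
print(accept(token, "billing-api", "documents:read"))    # False
# The intended service still enforces scope.
print(accept(token, "retrieval-api", "documents:write")) # False
```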

Looking Ahead: Deep Dive into Non-Human Identity Security

Future topics will explore:

the role of automation in managing and securing non-human identities

techniques to proactively detect and respond to risk signals across machines and services

approaches to ensuring compliance in regulated environments

advanced patterns for securing agentic AI systems and service interactions

strategies for addressing privacy concerns in machine and service identity management

About the Author

Gopal Wunnava is an enterprise AI architect and founder of DataGuard AI Consulting, specializing in AI security, governance, and large-scale data architecture.

The author is the creator of the “7 Essential Layers of AI Security & Governance” framework and has extensive experience designing and implementing data and AI platforms across large enterprise environments.

He brings enterprise experience across healthcare, financial services, and media, combining consulting at Big 4 firms with hands-on work at companies such as Amazon and Disney.

His work is grounded in both thought leadership and practical execution, with deep subject matter expertise in data, governance, and AI frameworks. He also holds the Artificial Intelligence Governance Professional (AIGP) certification from the International Association of Privacy Professionals (IAPP), reflecting his focus on responsible AI and governance practices.

His work focuses on helping organizations adopt AI safely, responsibly, and at scale—bridging architecture, governance, and real-world implementation.


© 2026 DataGuard AI Consulting. All rights reserved.

This framework is protected under U.S. copyright law.
