The Access Controls Layer governs who can access what, when, and how across data, systems, and AI workloads through identity, authorization, and policy enforcement.

Introduction

This article is part of the ‘7 Layers of AI Security & Governance © Framework.’

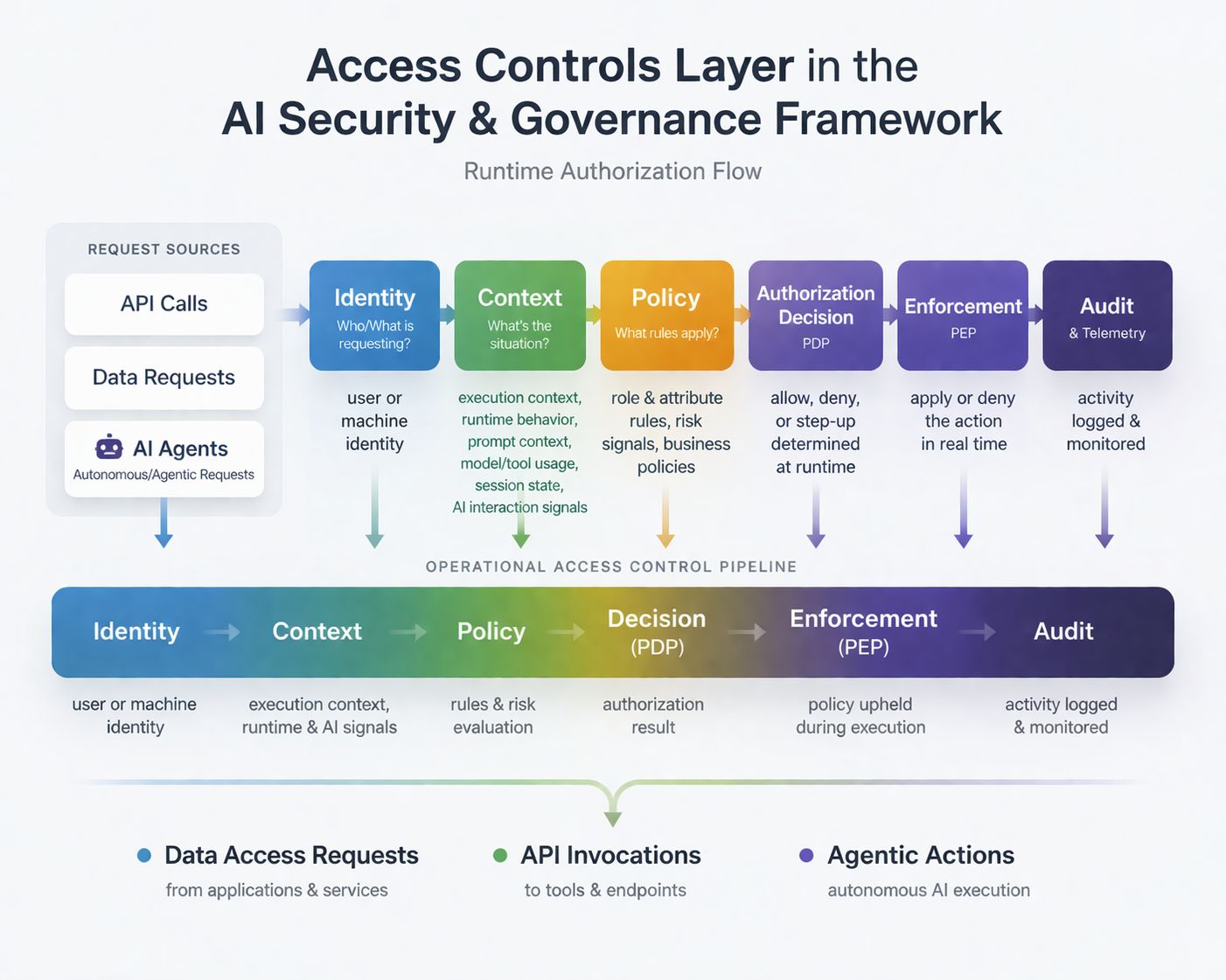

The Access Controls Layer is the control-plane architecture responsible for evaluating, enforcing, and auditing authorization decisions across systems, data, APIs, AI models, and execution environments.

Given a validated identity — whether human, machine, or agent — this layer determines:

what resources may be accessed

what actions may be performed

under what contextual conditions access is permitted

It operates at runtime, applying policy-driven authorization decisions using models such as Role-Based Access Control (RBAC), Attribute-Based Access Control (ABAC), or hybrid approaches.
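A hybrid model can be pictured as two checks that must both pass: a role check (RBAC) and an attribute check (ABAC). The sketch below is a minimal illustration under stated assumptions, not a reference implementation. The role map, resource names, attribute keys, and the `AccessRequest` type are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission map (the RBAC part).
ROLE_PERMISSIONS = {
    "analyst": {("claims_table", "read")},
    "ml_engineer": {("claims_table", "read"), ("fraud_model", "invoke")},
}

@dataclass
class AccessRequest:
    principal: str
    roles: set
    resource: str
    action: str
    attributes: dict = field(default_factory=dict)  # runtime context (the ABAC part)

def rbac_allows(req: AccessRequest) -> bool:
    """True if any of the caller's roles grants (resource, action)."""
    return any(
        (req.resource, req.action) in ROLE_PERMISSIONS.get(role, set())
        for role in req.roles
    )

def abac_allows(req: AccessRequest) -> bool:
    """Attribute conditions; a missing attribute fails closed."""
    attrs = req.attributes
    if attrs.get("network") not in {"corporate", "vpn"}:
        return False
    if attrs.get("data_sensitivity") == "restricted" and not attrs.get("mfa_verified", False):
        return False
    return True

def authorize(req: AccessRequest) -> bool:
    # Hybrid decision: the role must grant the action AND the context must qualify.
    return rbac_allows(req) and abac_allows(req)

request = AccessRequest("alice", {"analyst"}, "claims_table", "read", {"network": "vpn"})
print(authorize(request))
```

Keeping the two checks separate lets role assignments stay relatively stable while the contextual conditions change independently.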

Enforcement occurs at the point of access and extends across:

row- and column-level data controls

scoped API and service permissions

token governance and delegation boundaries

execution isolation and containment
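As an illustration of the first item in this list, row- and column-level control can be sketched as a filter applied at the point of access. The example assumes an in-memory dataset and a hypothetical `POLICY` table keyed by role. A real data platform would enforce this inside the query engine rather than in application code.

```python
# Hypothetical sample data; the field names are illustrative.
ROWS = [
    {"patient_id": 1, "region": "EU", "diagnosis": "A", "ssn": "111-11-1111"},
    {"patient_id": 2, "region": "US", "diagnosis": "B", "ssn": "222-22-2222"},
]

# Hypothetical policy: which rows and which columns each role may see.
POLICY = {
    "eu_analyst": {
        "row_filter": lambda row: row["region"] == "EU",
        "columns": {"patient_id", "region", "diagnosis"},
    },
}

def query(role, rows):
    """Return only the rows and columns the role is entitled to."""
    policy = POLICY.get(role)
    if policy is None:
        return []  # deny by default when no policy exists for the role
    return [
        {col: val for col, val in row.items() if col in policy["columns"]}
        for row in rows
        if policy["row_filter"](row)
    ]

print(query("eu_analyst", ROWS))
# [{'patient_id': 1, 'region': 'EU', 'diagnosis': 'A'}]
```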

In modern AI systems, authorization is no longer a static configuration—it is a runtime decisioning function. Every access request—whether initiated by a user, service, or agent—must be evaluated dynamically based on identity, context, policy, and risk signals before execution.

Core Objectives

The core objectives of this layer are to ensure:

Least privilege

Context-aware authorization

Deterministic and auditable enforcement

Importantly, this layer consumes authenticated identity signals but remains focused exclusively on authorization and enforcement, maintaining a clear separation between identity verification and access decisioning.

This layer enables:

metadata-driven policy evaluation

dynamic masking or transformation of sensitive data

audit-grade logging and traceability

federated governance models (e.g., data mesh environments)
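To make metadata-driven evaluation and dynamic masking concrete, the sketch below tags each column with a sensitivity label and masks values the caller is not cleared for. The tag names, the `COLUMN_TAGS` catalog, and both masking functions are assumptions made for illustration.

```python
# Hypothetical catalog metadata: column name -> sensitivity tag.
COLUMN_TAGS = {"email": "pii", "ssn": "pii_high", "amount": "public"}

def mask_partial(value: str) -> str:
    """Keep the first character and replace the rest with asterisks."""
    return value[:1] + "*" * (len(value) - 1)

def mask_full(value: str) -> str:
    """Hide the value entirely."""
    return "****"

# Hypothetical mapping from tag to masking function.
MASKERS = {"pii": mask_partial, "pii_high": mask_full}

def apply_masking(record: dict, cleared_tags: set) -> dict:
    """Return a copy of the record with uncleared columns masked."""
    masked = {}
    for column, value in record.items():
        # Untagged columns are treated as the most sensitive class.
        tag = COLUMN_TAGS.get(column, "pii_high")
        if tag == "public" or tag in cleared_tags:
            masked[column] = value
        else:
            masked[column] = MASKERS[tag](str(value))
    return masked

record = {"email": "ana@example.com", "ssn": "111-11-1111", "amount": 42}
print(apply_masking(record, cleared_tags={"pii"}))
```

Because the policy keys on tags rather than column names, newly onboarded datasets inherit the same behavior once their columns are tagged.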

What This Means in AI Systems

In AI-enabled ecosystems, the Access Controls Layer becomes significantly more critical — and more complex.

While the Data Layer governs how data is structured, protected, and used, the Access Controls Layer governs who or what can interact with that data and under what conditions. This distinction is critical in AI systems, where data access often occurs dynamically at runtime through APIs, agents, and retrieval pipelines.

For example, an AI agent invoking external tools may access multiple systems using delegated tokens. Without proper access controls, these chained actions can unintentionally escalate privileges or access sensitive data beyond intended boundaries.
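One way to contain this risk is scope attenuation: each delegated token may carry at most the scopes of its parent, and the chain has a bounded depth. The following is a minimal sketch under those assumptions. The `Token` type, the scope strings, and the depth limit are hypothetical and do not correspond to any specific token standard.

```python
from dataclasses import dataclass

MAX_DELEGATION_DEPTH = 2  # hypothetical limit on chained delegation

@dataclass(frozen=True)
class Token:
    subject: str
    scopes: frozenset
    depth: int = 0  # number of delegation hops from the original principal

def delegate(parent: Token, new_subject: str, requested_scopes) -> Token:
    """Issue a child token whose scopes never exceed the parent's."""
    if parent.depth >= MAX_DELEGATION_DEPTH:
        raise PermissionError("delegation depth exceeded")
    # Intersection: a requested scope the parent lacks is silently dropped.
    granted = parent.scopes & frozenset(requested_scopes)
    return Token(new_subject, granted, parent.depth + 1)

user = Token("user:ana", frozenset({"crm:read", "mail:send"}))
agent = delegate(user, "agent:planner", {"crm:read", "hr:read"})
print(sorted(agent.scopes))  # ['crm:read']
```

Here the agent requested `hr:read`, which the user never held, so the scope is not granted. Privileges can only narrow as the chain grows.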

In agentic AI systems, access decisions may be executed without direct human initiation, requiring controls that govern autonomous and delegated actions.

At a foundational level, this layer ensures that:

The right entities—humans, services, and AI agents—can access the right data and capabilities, at the right time, under the right conditions.

Key Shift

Traditional systems:

Access is user-driven and relatively static

AI-enabled systems:

Access becomes dynamic, delegated, and increasingly autonomous

AI introduces access patterns that traditional models were not designed to handle.

This layer must now govern access across:

model endpoints and inference APIs

tool integrations and external connectors

machine and service identities

token-based delegation and chained execution flows

agent-initiated actions across systems

These introduce new risks, including:

privilege escalation through chained workflows, where propagated tokens may unintentionally expand access rights across systems

token leakage and misuse

over-permissioned agents and services

unintended or autonomous actions beyond defined boundaries

As a result, access control can no longer rely solely on static roles — it must become:

Context-aware across identity, execution environment, and runtime behavior

Policy-driven

Dynamically enforceable

Continuously auditable

Governance and Risk Implications

The Access Controls Layer plays a central role in reducing both operational and regulatory risk.

It ensures that:

access is explicitly defined and enforceable

permissions are constrained to least privilege

all access activity is traceable and auditable

sensitive data and AI capabilities are protected from misuse

At the same time, it enables organizations to:

safely democratize access to data and AI

support distributed operating models (e.g., data mesh)

maintain governance consistency across platforms

anticipate emerging risks from automation and agentic AI systems

Ultimately, this layer acts as the enforcement backbone of AI governance, translating policies and intent into real-time, runtime controls.

Key AI Governance Tenets (Access Controls Layer)

Continuous assurance

Context-aware, policy-aligned access enforcement

Automated evidence generation

These tenets define how access control must evolve in AI-enabled systems.

Continuous assurance ensures that access decisions are not evaluated once, but continuously enforced and validated across identities, actions, and execution contexts. This includes real-time monitoring of access patterns, detection of anomalies and privilege escalation, and consistent enforcement across APIs, data platforms, AI pipelines, and agent workflows.

Context-aware and policy-aligned access enforcement ensures that authorization decisions are dynamically governed by policy — not just at initiation, but throughout execution. This includes enforcing fine-grained controls across model inference, RAG pipelines, and agent actions, while adapting access decisions based on runtime context, behavior, and evolving risk signals.
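Enforcement throughout execution can be sketched as a check that runs before every step of an agent plan rather than once at the start. The example below is illustrative only. The action names, the `risk_score` signal, and the threshold are assumptions, and a missing risk score is treated as maximum risk so that the check fails closed.

```python
def authorize_step(action, context, allowed_actions, max_risk=0.7):
    """Allow a step only if the action is permitted and current risk is acceptable."""
    return action in allowed_actions and context.get("risk_score", 1.0) <= max_risk

def run_agent_plan(plan, allowed_actions):
    """Execute steps in order, re-authorizing each one.

    Returns (executed_actions, blocked_action). blocked_action is None
    when every step was authorized.
    """
    executed = []
    for action, context in plan:
        if not authorize_step(action, context, allowed_actions):
            return executed, action  # halt at the first denied step
        executed.append(action)  # a real system would invoke the tool here
    return executed, None

plan = [
    ("search_docs", {"risk_score": 0.1}),
    ("read_record", {"risk_score": 0.2}),
    ("export_data", {"risk_score": 0.9}),  # risk signal rises mid-execution
    ("send_email", {"risk_score": 0.1}),
]
allowed = {"search_docs", "read_record", "export_data", "send_email"}
print(run_agent_plan(plan, allowed))
# (['search_docs', 'read_record'], 'export_data')
```

Although every action in the plan is permitted in principle, the third step is blocked because the runtime risk signal exceeded the threshold, and the remaining steps never run.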

Automated evidence generation ensures that all access decisions, enforcement actions, and violations are fully traceable and auditable. This includes real-time logging, continuous compliance validation, and the ability to generate audit-ready evidence without manual intervention.
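One common way to make decision logs tamper-evident is to chain each record to the previous one with a hash. The sketch below uses only the Python standard library. The record fields are hypothetical, and a production system would also add timestamps, signing, and durable storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first record

def _digest(prev_hash: str, decision: dict) -> str:
    # Canonical JSON (sorted keys) so the same decision always hashes the same way.
    body = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

def append_record(log: list, decision: dict) -> None:
    """Append an authorization decision, chained to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = dict(decision)  # copy so later caller mutations do not alter the log
    log.append({"decision": record, "prev_hash": prev_hash,
                "hash": _digest(prev_hash, record)})

def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered record breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["decision"]):
            return False
        prev_hash = entry["hash"]
    return True

audit_log = []
append_record(audit_log, {"principal": "agent:planner", "action": "read", "effect": "deny"})
append_record(audit_log, {"principal": "user:ana", "action": "read", "effect": "allow"})
print(verify(audit_log))  # True
```

If any stored decision is altered after the fact, `verify` returns `False`, which gives auditors a simple integrity check over the evidence trail.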

As organizations mature, these tenets evolve into adaptive, closed-loop access control systems—where authorization decisions continuously adjust based on context and behavior, enforcement is validated across distributed environments, and anomalies trigger automated remediation and policy refinement.

In practice, implementing effective access control requires structured policy models, enforcement patterns, and governance approaches that operate consistently across systems, data platforms, APIs, and AI workflows.

Looking Ahead: Deep Dive into Access Control Patterns

Each of these areas introduces deeper architectural and governance considerations — from machine identity and authorization models to autonomy controls in agentic systems — which we will explore in greater detail through dedicated deep-dive articles.

About the Author

Gopal Wunnava is an enterprise AI architect and founder of DataGuard AI Consulting, specializing in AI security, governance, and large-scale data architecture.

He is the creator of the “7 Essential Layers of AI Security & Governance” framework and has extensive experience designing and implementing data and AI platforms across large enterprise environments.

He brings enterprise and multi-industry experience across healthcare, financial services, and media, combining consulting experience at Big 4 firms with hands-on, real-world experience at companies such as Amazon and Disney.

His work is grounded in both thought leadership and practical execution, with deep subject matter expertise in data, governance, and AI frameworks. He also holds the Artificial Intelligence Governance Professional (AIGP) certification from the International Association of Privacy Professionals (IAPP), reflecting his focus on responsible AI and governance practices.

His work focuses on helping organizations adopt AI safely, responsibly, and at scale—bridging architecture, governance, and real-world implementation.

Subscribe for upcoming deep dives into each layer of the framework and practical implementation strategies.

© 2026 DataGuard AI Consulting. All rights reserved.

This framework is protected under U.S. copyright law.